Imagine looking at a high school transcript. Perhaps you are a high school student, or the parent of one, or a college admissions officer. The transcript shows a B+ in biology. What does this mean? Presumably, it means that the student has a B+ level grasp of biology. But could it not also mean that the student's grasp of biology is higher than B+, and that deficiencies in writing, data visualization, or the discipline of turning work in on time lowered the grade? Those ancillary skills may also have been probed in other courses, but the results are buried, invisible. This is ironic, because their broad applicability suggests that they may matter more, in the lives of more students, than the biology content.

There are at least three problems here. First, a curricular problem: academia has long operated in disciplinary silos, neglecting broadly applicable interdisciplinary skills. Second, an assessment problem: even if such skills are relevant to multiple disciplines, they are rarely defined or assessed. No one owns them. Third, a reporting problem: transcripts that show holistic grades do not provide students or external audiences a clear picture of student ability. The B+ in biology prompts no specific action for the student and is ambiguous to the readers of the transcript.

With that framing, the remedy seems clear enough:

Step 1. Define interdisciplinary learning outcomes

Step 2. Teach and assess outcomes across courses

Step 3. Design reports that present a clearer picture of student ability

Below, I'll expand on that outline, describing a process that you can use to improve your own school's work, and I'll show how we implemented it in the Minerva Baccalaureate program. Along the way, I'll also explain additional steps we took to promote knowledge transfer, i.e., students' ability to apply their knowledge and skill in diverse ways and contexts.

Step 1: Defining the outcomes

The first step is to distinguish disciplinary from interdisciplinary learning outcomes and explicitly describe both. (By "learning outcome" I mean a measurable aspect of student knowledge or ability. At Minerva, we use hashtags to help name individual learning outcomes, as described below.) Single-discipline knowledge is still relevant; it's just not the whole story. I won't discuss it much here because even if single-discipline learning outcomes aren't articulated or tracked very well, they are already the foundation of most high school curricula, whereas interdisciplinary outcomes are not.

A heuristic I recommend is that an outcome is interdisciplinary if it has plausible application in 60% or more of the curriculum. For example, data visualization is clearly relevant in science, math, and social studies. Maybe language arts too, if done creatively. That's at least three of the four traditional high school subjects, so a learning outcome about data visualization skills (#dataviz) deserves promotion to interdisciplinarity. In another realm, the use of evidence to support an argument is applicable in almost every subject, so in the Minerva Baccalaureate, the learning outcome we call #evidencebased is also an interdisciplinary outcome.
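To make the heuristic concrete, here is a minimal Python sketch. The four-subject list, the function name `is_interdisciplinary`, and the example subject sets are illustrative assumptions; only the 60% threshold and the #dataviz example come from the discussion above.

```python
# Assumed curriculum: the four traditional high school subjects.
SUBJECTS = {"science", "math", "social studies", "language arts"}


def is_interdisciplinary(applicable_subjects, all_subjects=SUBJECTS, threshold=0.6):
    """Return True if the outcome plausibly applies in at least
    `threshold` (as a fraction) of the subjects in the curriculum."""
    covered = set(applicable_subjects) & set(all_subjects)
    return len(covered) / len(all_subjects) >= threshold


# #dataviz: plausible in science, math, and social studies (3 of 4 subjects).
dataviz_subjects = {"science", "math", "social studies"}
print(is_interdisciplinary(dataviz_subjects))   # 0.75 >= 0.6, so True

# A hypothetical outcome that applies in only one subject.
print(is_interdisciplinary({"language arts"}))  # 0.25 < 0.6, so False
```

Judging what counts as a "plausible application" remains a human decision; the code only does the counting once that judgment has been made.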

There is no one right answer regarding how many learning outcomes you define in this way, but you'll soon wonder how to determine whether you've gotten them all. Let me save you some trouble: you haven't. No matter how much time you spend on this step, you will not exhaust or fully structure the entire space of human cognition. Just remember that you are starting from a position in which, very likely, no interdisciplinary skills have been articulated or monitored. Begin by constructing a high-level framework aligned with your institutional mission and educational philosophy, recruit a diverse team, and call the result version 1.0. As students produce assessable work, your instructors will notice gaps—skills and ideas that students need but which don't line up naturally with any of the outcomes you've yet defined. Incorporating those will lead you to version 1.1. There will be version management challenges, but not insurmountable ones.

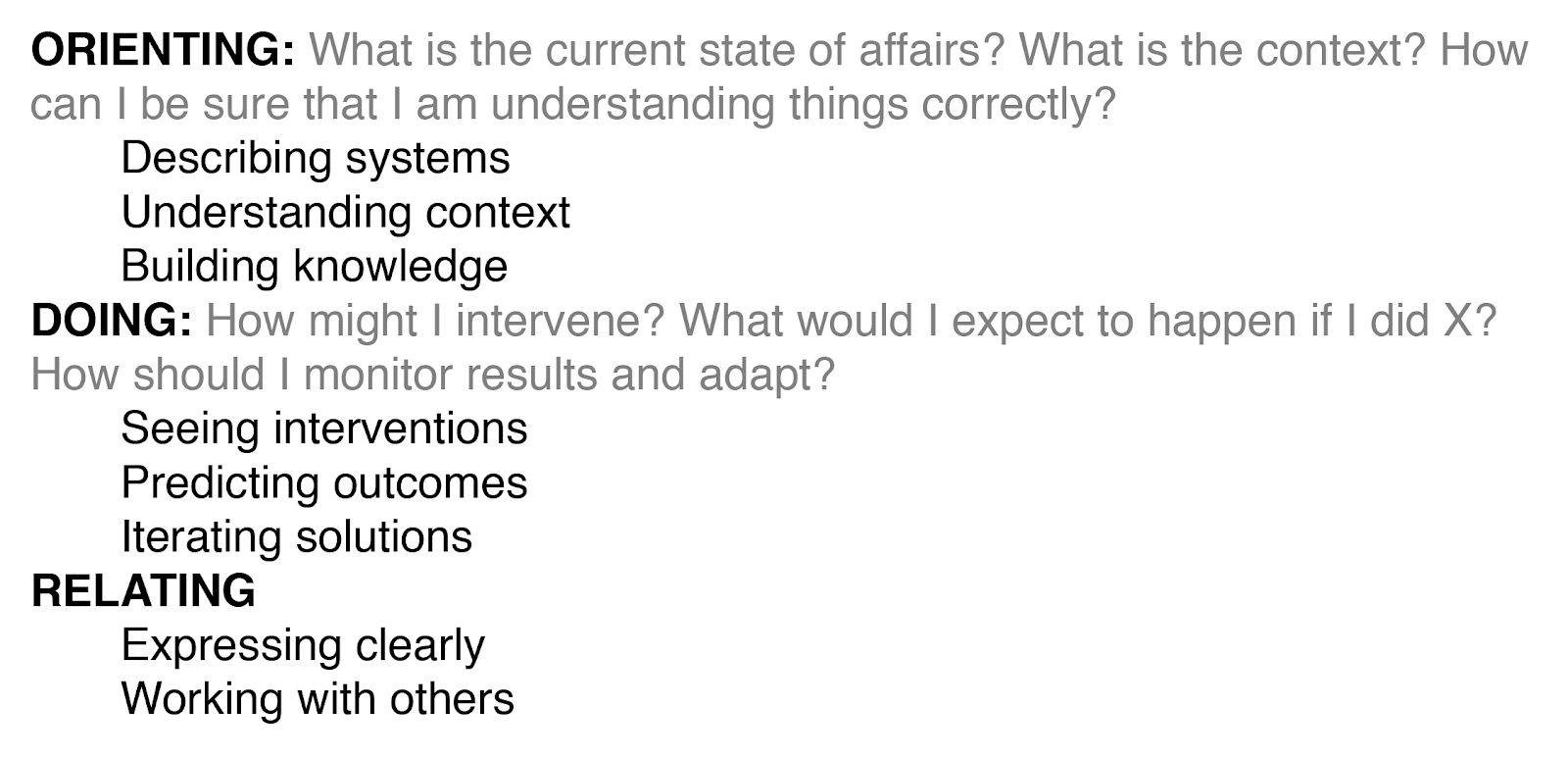

Minerva's organizational mission centers on the practical application of knowledge, so this process yielded a set of learning outcomes centered on problem solving. Each learning outcome has a one-paragraph description and a grading rubric, and is explicitly introduced to students in class. (Which class introduces them doesn't really matter—they will reappear many times.) Once these were developed, we grouped and organized them into the following hierarchy of competencies—of course, yours will almost certainly differ.

As you work through the outcomes definition process, you may also confront questions related to assessment volume: How many outcomes can your instructors assess? How often? How much feedback can students meaningfully respond to? Broad outcomes will arise often and present many opportunities for students to learn, but if they are too broad, feedback will be too vague to be actionable. Thus, an outcome like #writing is likely too broad. On the other hand, very narrow outcomes like #verbconjugation will arise too infrequently and probably be seen as nitpicky and unimportant to external audiences. Choose a middle road—the Minerva Baccalaureate uses #composition, #thesis, and a handful of others—with outcomes that are directly evaluable and as independent of each other as possible.

Step 2: Teaching and assessing in multiple contexts

With that organized set of outcomes in hand, the next step is to create opportunities in every course—perhaps even in extracurricular work—for students to apply them. I emphasize here that you need not build all-new courses. You can instead find ways to deepen and extend the core subjects—science, social studies, language arts, and math—to make contact with your new suite of interdisciplinary outcomes. You don't have to pack all the outcomes into one course or one year, because your goal should be to make contact with them not just across courses, but over time. Years. Why? Formative assessment.

The quiz and exam scores that form the basis of so many traditional course grades represent student ability at one moment. Students cram for these high-stakes assessments and soon forget much of what they memorized. Even when they aren't forgotten, memories formed this way are likely to be compartmentalized, disconnected just-so stories. So the challenge is to build courses, assignments, and lesson plans that allow interdisciplinary outcomes to appear and reappear in diverse settings, not just as interlopers but as recurring major characters, alongside the marquee disciplinary content. The goal is to make students think, correctly, "Hm! This idea seems to pop up everywhere. Whatever I end up studying or doing, I'm going to need to have some skill with it."

I said earlier that the Minerva Baccalaureate is designed to promote knowledge transfer. Most people understand this to refer to application across knowledge domains, and indeed (as I'll explain further in the next section) we monitor students' ability to apply learning outcomes across courses. It may, however, be useful to think of knowledge transfer as being about a more thoroughgoing kind of generalizability. If a student can meaningfully transport a concept or skill to new situations and apply it well, then they have transferred it. Doing this presupposes that they recognize, in the first place, that the concept is applicable in the new situation. It may also involve the distinction between analysis and synthesis—or, if you like, critique and production. It is one thing to dissect someone else's claims and ideas; it is something else to recognize limitations in our own thinking. Even time itself can be thought of as a dimension for knowledge transfer, as can the modality of expression (e.g., writing or speaking).

Considerations like these led us to an approach in which rubric-based scores, each one on a specific learning outcome, represent the raw measurements of student ability, and weights attached to those scores represent both the importance of a score and contextual information about several dimensions of knowledge transfer.

[Figure: rubric-based scores and the weights attached to them]

Rubric-based scoring is a best practice in general: it helps align student and teacher expectations about what constitutes good work, and helps ensure that grading practices are consistent from teacher to teacher. This practice is improved even further by grading on specific, clearly articulated learning outcomes. Finally, this weighting mechanism allows us to represent the importance of different assignments, the level of difficulty the student had to tackle to get the score, and the recency of the score.
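As a rough illustration of how such weights could combine, the sketch below computes a weighted mean in which each score's weight is the product of an importance factor, a difficulty factor, and a recency factor. Everything specific here is an assumption made for the example: the `Score` fields, the multiplicative form, the exponential decay, and the 180-day half-life. It is not a description of Minerva's actual formula.

```python
from dataclasses import dataclass


@dataclass
class Score:
    outcome: str       # learning outcome, e.g. "#evidencebased"
    value: float       # rubric score, on whatever scale the rubric uses
    importance: float  # assumed: larger for weightier assignments
    difficulty: float  # assumed: larger for harder tasks
    age_days: float    # days since the score was awarded


def weight(score, half_life_days=180.0):
    """Assumed weighting: importance x difficulty x exponential recency decay."""
    recency = 0.5 ** (score.age_days / half_life_days)
    return score.importance * score.difficulty * recency


def weighted_mean(scores, half_life_days=180.0):
    """Weighted mean of rubric scores; None if there is nothing to average."""
    weights = [weight(s, half_life_days) for s in scores]
    total = sum(weights)
    if total == 0:
        return None
    return sum(w * s.value for w, s in zip(weights, scores)) / total


history = [
    Score("#evidencebased", 2.0, importance=1.0, difficulty=1.0, age_days=360.0),
    Score("#evidencebased", 4.0, importance=1.0, difficulty=1.0, age_days=0.0),
]
# The year-old score has weight 0.25 and today's score has weight 1.0,
# so the mean is (0.25 * 2 + 1.0 * 4) / 1.25 = 3.6 rather than the unweighted 3.0.
print(weighted_mean(history))
```

With a decay of this kind, an early low score fades as newer evidence accumulates, which is the behavior the recency weighting is meant to produce.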

Step 3: Designing reports

Before this final step, let's take stock. By working through the previous steps, we have defined interdisciplinary outcomes and found ways to help students practice them in diverse contexts. We are tracking the courses in which these outcomes are assessed to illuminate knowledge transfer: the more courses in which a student successfully applies a learning outcome, the better they are at transferring it. In the Minerva Baccalaureate, we also track details such as whether the student recognized spontaneously that a learning outcome was relevant, and whether their application involved critiquing another person's work or producing original work. You may have other relevant contextual information, leading to rich, multi-dimensional data. Our final task, therefore, is to present this information in a way that helps students identify areas for growth, and helps external readers understand each student's academic record.

One approach that achieves both these ends is a mastery-based transcript, such as those enabled by the Mastery Transcript Consortium. I strongly endorse that approach for its flexibility and support for competencies that go beyond siloed disciplinary knowledge. If you are considering concrete steps to improve upon your school's transcript, take a close look. Schools implementing the Minerva Baccalaureate program can do this... but we've gone even further. Because there are so many potential ways of presenting information like this, I'll shift now to just explaining our solution.

Every month, we generate a progress report for each student. Everything in the report is based on the same underlying data: a collection of scores on individual learning outcomes. As described above, each of these scores occurs in a specific context. By the time a student finishes the program, they will have more than a thousand of these micro-assessments. We slice and dice this rich dataset in different ways to help each student understand different facets of their learning journey.

The report opens with a high-level summary of their performance so far. The most holistic measure is the diploma score; students and parents (and, ultimately, college admissions staff) looking for a single summary statistic representing student achievement look here. The diploma score is the sum of three components. The first represents interdisciplinary breadth or scope; it corresponds, roughly, to how many interdisciplinary learning outcomes a student has applied across courses. The second component reflects how high (or low) they scored on those same outcomes. The third and last component represents how high (or low) they scored on disciplinary outcomes.

[Figure: progress-report summary showing the diploma score, its components, and the progress plot]

To show student progress over time, the report includes a plot showing the diploma score over the entire length of the program, making it clear to students that this is a long-term enterprise, one that will present many opportunities to practice their skills with each learning outcome. The diploma score and its components are continually updated throughout the three-year program, and the weights' temporal decay means that recent scores tend to have a greater bearing. This helps students focus on steady practice and growth rather than rushed cramming.

Summary measures like the diploma score and its components are helpful for understanding the big picture, but for more detailed guidance about the specific areas of strength or weakness, the report next shows a series of tables presenting the student's performance within each competency. Recall that each competency is a group of learning outcomes. For example:

[Figure: sample competency table for "Describing systems," with one column per track]

The row title (Describing systems) shows the competency, taken from the hierarchy I presented earlier. The colored boxes to the right show the student's weighted mean score on the learning outcomes (#observation, etc.) in that competency. Each column represents the average score for all five outcomes... but only for courses in one track. Our sample student has just begun year 2 (see the progress plot in the previous figure), which means that in the science track, they have completed the biology course (in year 1) and are currently taking chemistry (year 2). This table reveals a 3.3 average score for this set of learning outcomes as assessed in biology and chemistry. No scores have been awarded yet for these outcomes in the mathematics track. Because of the way we calculate interdisciplinary breadth, this represents an opportunity for growth, as does the low score in the language arts track.
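A table row like this can be derived from the underlying scores with a simple group-by. The sketch below is a hypothetical reconstruction, not our production code: the record layout `(outcome, track, score, weight)`, the track names, and the sample numbers are all assumptions, and only #observation is a real outcome name from the competency.

```python
from collections import defaultdict

# Assumed track names for illustration.
TRACKS = ["science", "mathematics", "social studies", "language arts"]


def competency_by_track(records, competency_outcomes, tracks=TRACKS):
    """Weighted mean score per track for the outcomes in one competency.

    records: iterable of (outcome, track, score, weight) tuples.
    Tracks with no scores yet map to None, i.e. an empty cell in the table.
    """
    sums = defaultdict(lambda: [0.0, 0.0])  # track -> [weighted sum, total weight]
    for outcome, track, score, weight in records:
        if outcome in competency_outcomes:
            sums[track][0] += weight * score
            sums[track][1] += weight
    return {
        t: (sums[t][0] / sums[t][1] if t in sums and sums[t][1] > 0 else None)
        for t in tracks
    }


# Hypothetical competency: "#observation" plus a placeholder outcome name.
describing_systems = {"#observation", "#placeholder_outcome"}

records = [
    ("#observation", "science", 3.0, 1.0),
    ("#observation", "science", 4.0, 2.0),
    ("#observation", "language arts", 2.0, 1.0),
    ("#thesis", "science", 1.0, 1.0),  # belongs to another competency; ignored
]

row = competency_by_track(records, describing_systems)
for track, mean in row.items():
    print(track, "no scores yet" if mean is None else round(mean, 2))
```

Returning `None` for a track with no scores, rather than zero, keeps "not yet assessed" visually distinct from "assessed and scored low," and the two call for different responses from the student.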

The final display in the progress report presents details of a different kind, namely, the student's encounters with various types of knowledge transfer. This view collapses across all learning outcomes and courses—it's meant to illuminate styles of application and complement the contentful measures presented in previous tables.

[Figure: sample knowledge-transfer table, with one row per source of score]

The first column of this table, the source of the score, indicates the type of student work that was assessed. In addition to assignments, in-class polls can be scored, as can spoken contributions. Minerva's Forum platform allows instructors to review and assess class videos, providing not just scores but also narrative feedback, just as they would for a written assignment. The other columns of the table show the other dimensions of knowledge transfer mentioned earlier. Students can use these to identify areas for growth, albeit in a somewhat different way than with the previous tables. In this example, the student should think about why they are scoring low on unprompted applications in assignments. Since that result pertains to an average across all learning outcomes and courses, it may represent something important not captured by more conventional performance measures.

Over the span of three years, students may receive ~50 scores on each of the 30+ interdisciplinary learning outcomes, spread across disciplinary tracks and the various combinations of knowledge transfer contexts. This rich dataset presents unique opportunities for monitoring student learning; for sharing academic progress with parents, teachers, and other audiences; and even for evaluating program efficacy. And for the purposes of this essay, it offers a plausible remedy for all three of the problems associated with the opening scenario of a minimally informative B+ in biology.

Conclusion

Despite the entrenchment of traditional high school curricula, pedagogy, and assessment, innovation is possible. You may gaze wistfully at schools with the freedom to overhaul everything, plunging into pure project-based learning, discovery learning, learning contracts, community-based learning, and so on—but you need not go so far. You can satisfy the constraints of disciplinary standards and still find ways to reach beyond them, delivering exceptional interdisciplinary learning experiences and illuminating student growth and competence in ways much better than traditional measures.